Anyone who lived through the 1980s, the last decade of the Cold War, knows first-hand what it means to fear an imminent war between the United States and the Soviet Union fought with sophisticated and powerful missiles. During the presidency of Ronald Reagan (1981-1989), the US achieved military supremacy over its enemy through heavy investment in military technology, at the price of an impoverished country with great social inequality.

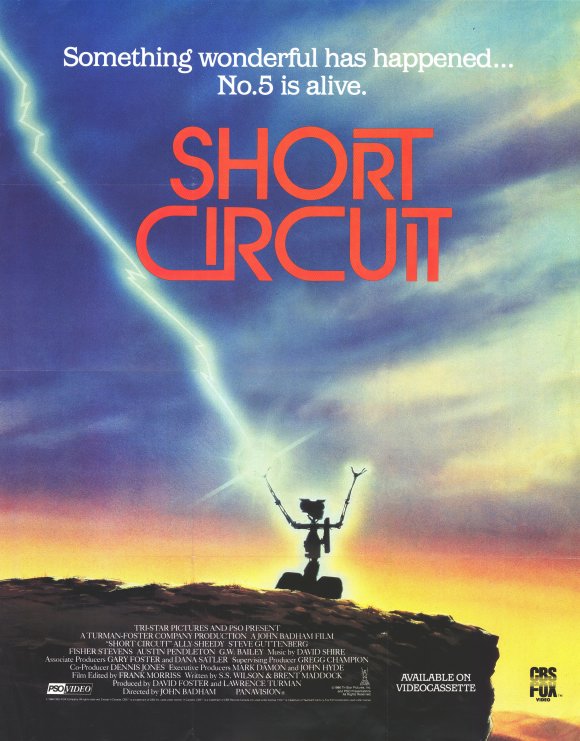

Short Circuit (John Badham, 1986) was made in this context. It is a story about the military application of robotics that, despite being a comedy, questions the investment of valuable resources in weapons. Howard Marner (Austin Pendleton) is a brilliant scientist who works for the robotics division of a large corporation, NOVA. He is aware that the government will buy from them robots designed to enter enemy territory without endangering soldiers and, once there, attack with all kinds of weapons, including bombs. This scientist, seduced by a dark pragmatism, has his antithesis in Newton Crosby (Steve Guttenberg), another scientist at the company, who hates the military application of his work.

The foolishness of the euphemism “keeping the peace” through the manufacture and use of weapons is emphasized throughout the script, especially through the unfriendly and histrionic character Skroeder (G. W. Bailey), an army officer who also works on the development of military applications for robot soldiers.

Against this backdrop, five brand-new SAINT (Strategic Artificially Intelligent Nuclear Transport) robots are presented. From the beginning of the film, the great destructive power of these robots, ideal for the company's interests in a context of war, is on display.

However, a sudden and unpredictable event takes place: one of the units, connected to a generator, receives a powerful electric shock and, like some kind of metallic Frankenstein's monster, is given the gift of life and ignores the destructive programming its creators built into it.

This unit, number five, puts its creators in an awkward situation: armed with an arsenal that includes a laser weapon, it leaves the NOVA premises, with the consequent risk of causing serious injury and material damage in a peaceful environment, for which the company, as the owner of the device, would have to take responsibility.

Nevertheless, from the robot's point of view, there is no law to protect it, and so the now peaceful robot must flee to save its own skin, that is, to avoid being captured and dismantled. Today there is hardly any relevant legislation apart from regulations on industrial safety and manufacturing standards. However, there is growing interest in developing new laws for the new technological scenarios that advances in robotics are bringing closer.

South Korea is the country that has developed the most legislation in this regard, with the Korean law on the development and distribution of intelligent robots from 2005 and the Legal Regulation of autonomous systems in South Korea from 2012. Japan, as the world's leading power in robotics, has also developed a series of guidelines, the latest of which was approved in 2015. Meanwhile, the European Union is working on a draft, without any legal force, called Regulating Robotics: a Challenge for Europe.

One of the objectives of these regulations is to classify the devices at different levels:

Level 1. Programmed Intelligent Systems

Level 2. Non-Autonomous Robots

Level 3. Autonomous Robots

Level 4. Artificial Intelligences

These regulations are not expected to address the robot's point of view. Instead, they aim to establish safety measures, responsibilities and quality standards for machines whose development involves a large number of technology sectors.

The case raised by Short Circuit, that a robot might be entitled to rights, lies entirely beyond the legislation being developed at present, since robotics, from a practical point of view, aims to build machines that help us and make our lives easier, not living beings.

Even without considering robot number five a living being, or at least worthy of certain rights, it is striking that its creators choose to destroy it at all costs without even trying to understand how the machine became self-aware or, best of all, and the very thing that makes it less dangerous, why the robot is no longer governed by the wartime programming with which it was created.

We should not forget that whatever robots are like in the future, they will be a product of humans. Just like wars.

Translated by Olga Lledó Oliver
